Jefferson on the two-dollar bill

In a list of the colonies’ grievances against King George III, Jefferson wrote, “he has incited treasonable insurrections of our fellow-subjects, with the allurements of forfeiture and confiscation of our property.” But the future president, whose image now graces the two-dollar bill, must have realized right away that “fellow-subjects” was the language of monarchy, not democracy, because “while the ink was still wet” Jefferson took out “subjects” and put in “citizens.”

In a eureka moment, a document expert at the Library of Congress examining the rough draft late at night suddenly noticed that there seemed to be something written under the word citizens. It was no Da Vinci code or treasure map, but Jefferson’s original wording, soon uncovered using a technique called “hyperspectral imaging,” a kind of digital archeology that lets us view the different layers of a text. The rough draft of the Declaration was digitally photographed using different wavelengths of the visible and invisible spectrum. Comparing and blending the different images revealed the word that Jefferson wrote, then rubbed out and wrote over.

Above: Jefferson’s rough draft reads, “he has incited treasonable insurrections of our fellow-citizens”; followed by a detail of “fellow-citizens” with underwriting visible in ordinary light. Below: a series of hyperspectral images made by the Library of Congress showing that Jefferson’s initial impulse was to write “fellow-subjects.” [Hi-res images of the rough draft are available at the Library of Congress website.] Elsewhere in the draft Jefferson doesn’t hesitate to cross out and squeeze words and even whole lines in as necessary, but in this case he manages to fit his emendation neatly into the same space as the word it replaces.

By: Rebecca, on 4/2/2010
Blog: OUPblog
JacketFlap tags: History, book, Literature, Reference, Blogs, computer, digital, A-Featured, Prose, codex, file, Dennis Baron, A Better Pencil, scroll

Dennis Baron is Professor of English and Linguistics at the  University of Illinois. His book, A Better Pencil: Readers, Writers, and the Digital Revolution, looks at the evolution of communication technology, from pencils to pixels. In this post, also posted on Baron’s personal blog The Web of Language, he looks at the difference between scrolls and codexes.

The scroll, whose pages are joined end-to-end in a long roll, is older than the codex, a writing technology (known more familiarly as the book) with pages bound together at one end. Websites have always looked more like scrolls than books, a nice retro touch for the ultra-modern digital word, but as e-readers grow in popularity, texts are once again looking more like books than scrolls. While the first online books, the kind digitized by Project Gutenberg in the 1980s, consisted of one long, scrolling file, today’s electronic book takes as its model the conventional printed book that it hopes one day to replace.

Fans of the codex insist that it’s an information delivery system superior in every way to the scroll, and whether or not they approve of ebooks, they think that all books should take the form of codices. For one thing, book pages can have writing on both sides, making them more economical than scrolls, which are typically written on one side only (this particular codex advantage turns out to be irrelevant for ebooks). For another, the codex format makes it easier to compare text on different pages, or in different books, which some scholars think fosters objective, critical, or scientific thinking. It’s also easier to locate a particular section of a codex than to roll and unroll a scroll looking for something. These may or may not be advantages for books over scrolls, but neither matters online, where keyword searching makes it easy to find digitized text in a nanosecond, regardless of its format. It’s also possible to compare any online texts, or parts of them, simply by opening each in a different window and clicking from one to another. In the world of the ebook, codex or scroll becomes a preference, not an advantage.

A few tunnel-visioned readers associate the codex with Christianity, viewing scrolls as relics of heathen religion. Not to be outdone, some people see online books as messianic, and others think they represent the ultimate heresy — but religion aside, there’s no particular advantage for page over scroll in either the analog or the digital world. Finally, although this example of codex superiority is seldom mentioned, the codex can be turned into a flip book by drawing cartoons on the pages and then fanning them so the images appear to move. But then again, a motion picture is really a scroll full of pix unwinding at 24 frames per second. None of this makes a difference if your ebook, iPad, or smartphone won’t play Flash video.

There is one advantage of the book over the scroll that may apply to the computer. According to psychologists Christopher A. Sanchez and Jennifer Wiley, poor readers have more trouble understanding scrolled text on a computer than digital text presented in a format resembling the traditional printed page. But these researchers found that better readers, those with stronger working memories, understand scrolls and pages equally well.

While Sanchez and Wiley’s experiments suggest that for some readers, paging is better for comprehension than scrolling, their results are o

By: Rebecca, on 3/22/2010
Blog: OUPblog
JacketFlap tags: Blogs, research, students, A-Featured, Dennis Baron, internet, Reference, writing, Education, wikipedia

Dennis Baron is Professor of English and Linguistics at the  University of Illinois. His book, A Better Pencil: Readers, Writers, and the Digital Revolution, looks at the evolution of communication technology, from pencils to pixels. In this post, also posted on Baron’s personal blog The Web of Language, he looks at Wikipedia.

Admit it, we all use Wikipedia. The collaborative online encyclopedia is often the first place we go when we want to know a fact, a date, a name, an event. We don’t even have to seek out Wikipedia: in many cases it’s the top site returned when we google that fact, date, name, or event. But as much as we’ve come to rely on it, Wikipedia is also the online source whose reliability we most often question or ridicule.

Wikipedia is the ultimate populist text, a massive database of more than 3.2 million English-language entries and 6-million-plus entries in other languages. Anyone can write a Wikipedia article, no experience necessary. Neither is knowing anything about the subject, since Wikipedians — you can be one too — can simply copy information from somewhere else on the internet and post it to Wikipedia. It doesn’t matter if the uploaded material is wrong: that can be fixed some other time. Wikipedia’s philosophy comes right out of the electronic frontier’s rough justice: write first, ask questions later.

When it comes to asking those questions, doing the fact-checking, Wikipedia depends on the kindness of strangers. Once an article on any topic is uploaded, anyone can read it, and any Wikipedian can revise or edit it. And then another Wikipedian can come along and revise or edit that revision, ad infinitum. Of course not every error is apparent, and not every Wikipedian will bother to correct an error even if they notice one. Wikipedians can even delete entries, if they find fault with them, but then other Wikipedians can decide to reinstate them.

Such sketchy reliability is why many teachers warn students not to use Wikipedia in their research. This despite the fact that a 2005 Nature study showed that, so far as a selection of biology articles was concerned, Wikipedia’s reliability was on a par with that of the Encyclopaedia Britannica. But teachers don’t want their students using the Britannica either. Wikipedia actually offers an article about its own reliability, though the accuracy of that article remains to be determined.

A study by researchers at the University of Washington finds that most students use Wikipedia, even though their instructors warn them not to. Not only that, but students in architecture, science, and engineering are the most likely to use Wikipedia. Apparently those students, whose disciplines depend on accurate measurements and verifiable evidence, don’t expect accuracy from their works cited. According to the study, students in the social sciences and humanities, subjects emphasizing argumentation and critical reading, are less-frequent users of Wikipedia. Unfortunately, the Washington researchers didn’t ask these students how much they rely on Spark Notes.

It turns out that students also distrust Wikipedia, though not with the same intensity their teachers do. Only 16% of students find Wikipedia articles credible. To

By: Rebecca, on 3/16/2010
Blog: OUPblog
JacketFlap tags: Dennis Baron, History, Literature, internet, writing, A-Featured, Media, english, Prose, tweet, linguistics

Dennis Baron is Professor of English and Linguistics at the  University of Illinois. His book, A Better Pencil: Readers, Writers, and the Digital Revolution, looks at the evolution of communication technology, from pencils to pixels. In this post, also posted on Baron’s personal blog The Web of Language, he looks at writing on the internet.

“Should everybody write?” That’s the question to ask when looking at the cyberjunk permeating the World Wide Web.

The earlier technologies of the pen, the printing press, and the typewriter all expanded the authors’ club, whose members create text rather than just copying it. The computer has expanded opportunities for writers too, only faster, and in greater numbers. More writers means more ideas, more to read. What could be more democratic? More energizing and liberating?

But some critics find the glut of internet prose obnoxious, scary, even dangerous. They see too many people, with too little talent, writing about too many things.

Throughout the 5,000-year history of writing, the privilege of authorship was limited to the few: the best, the brightest, the luckiest, those with the right connections. But now, thanks to the computer and the internet, anyone can be a writer: all you need is a laptop, a Wi-Fi card, and a place to sit at Starbucks.

The internet allows writers to bypass the usual quality controls set by reviewers, editors and publishers. Today’s authors don’t even need a diploma from the Famous Writers School. And they don’t need to wait for motivation. Instead of staring helplessly at a blank piece of paper the way writers used to, all they need is a keyboard, and right away they’ve got something to say.

You may not like all that writing, but somebody does. Because the other thing the internet gives writers is readers, whether it’s a nanoaudience of friends and family or a virally large set of FBFs, Tweeters, and subscribers to the blog feed. Apparently there are people online willing to read anything.

Previous writing technologies came in for much the same criticism as the internet: too many writers, too many bad ideas. Gutenberg began printing bibles in the 1450s, and by 1520 Martin Luther was objecting to the proliferation of books. Luther argued that readers need one good book to read repeatedly, not a lot of bad books to fill their heads with error. Each innovation in communication technology brought a new complaint. Henry David Thoreau, never at a loss for words, wrote that the telegraph – the 19th century’s internet – connected people who had nothing to say to one another. And Thomas Carlyle, a prolific writer himself, insisted that the explosion of reading matter made possible by the invention of the steam press in 1810 led to a sharp decline in the quality of what there was to read.

One way to keep good citizens and the faithful free from error and heresy is to limit who can write and what they can say. The road to publication has never been simple and direct. In 1501, Pope Alexander VI’s Bulla inter multiples required all printed works to be approved by a censor. During the English Renaissance, when literature flourished and even kings and queens wrote poetry, Shakespeare couldn’t put on a play without first getting a license. Censors were a kind of low-tech firewall, but just as there have always been censors, there have always been writers evading them and readers willing, or even anxious, to devour anything on the do-not-read list.

Today crit

By: Rebecca, on 2/24/2010
Blog: OUPblog
JacketFlap tags: Media, Leisure, Revolution, Dennis Baron, A Better Pencil, History, internet, Technology, Blogs, language, digital, A-Featured

Dennis Baron is Professor of English and Linguistics at the  University of Illinois. His book, A Better Pencil: Readers, Writers, and the Digital Revolution, looks at the evolution of communication technology, from pencils to pixels. In this post, also posted on Baron’s personal blog The Web of Language, he looks at the success of the internet.

You’ve heard the Luddite gripes about the digital age: computers dehumanize us; text messages are destroying the language; Facebook replaces real friends with imaginary ones; instant messages and blogs give people a voice who have nothing to say. But now a new set of complaints is emerging, this time from computer scientists, internet pioneers who once promised that the digital revolution was the best thing since sliced bread, no, that it was even better, Sliced Bread 2.0.

It started in the mid-1990s with Clifford Stoll. You may remember Stoll as the Berkeley programmer who tracked down a ring of eastern European hackers who were breaking into secure military computers, and wrote up the adventure in the 1990 best-seller, The Cuckoo’s Egg. But a mere five years later Stoll published Silicon Snake Oil, a condemnation of the internet as oversold and underperforming. In a 1995 Newsweek op-ed, Stoll summed up the internet’s failed promise of happy telecommuters, online libraries, media-rich classrooms, virtual communities, and democratic governments in one word: “Baloney.”

More nuanced is the critique of Jaron Lanier, the programmer who brought us virtual reality, but who now labels life online “digital maoism.” In a recent interview in the Guardian, Lanier charged that after thirty years the great promise of a free and open internet has brought us not burgeoning communities of online musicians, artists, and writers, but “mediocre mush”; a pack mentality; recreations of things that were better done with older technologies; an occasional Unix upgrade; and an online encyclopedia. His conclusion: it’s all “pretty boring.”

And although internet guru Jonathan Zittrain praises the first personal computers and the early days of the internet for promoting unlimited creativity and exploration, he warns that the generative systems which enabled users to create new ways of being and communicating are giving way to tethered devices like smart phones, Kindles, Tivos, and iPads, all of which channel our communications and program our entertainment along safe and familiar paths and prohibit inventive tinkering. Zittrain reminds us that the PC was a blank slate, a true tabula rasa that let imaginative, technically-accomplished users repurpose it over and over again, but he fears that the internet appliance of the future will be little more than a hi-tech toaster programmed to let us do only what the marketing departments at Apple, Microsoft, Google, and Amazon want us to do.

It’s easy to ignore the Luddites. The internet isn’t destroying English (you’re reading this online, right?) or replacing face-to-face human interaction (Facebook or no Facebook, babies continue to be born). Plus, we’re all using computers and the ‘net, so how bad can they be?

But what about the informed critiques of experts like Stoll, Jaro

Dennis Baron is Professor of English and Linguistics at the University of Illinois.  His book, A Better Pencil: Readers, Writers, and the Digital Revolution, looks at the evolution of communication technology, from pencils to pixels. In this post, also posted on Baron’s personal blog The Web of Language, he looks at multitasking in a digital world.

Most of my students belong to the digital generation, so they consider themselves proficient multitaskers. They take notes in class, participate in discussion, text on their cell phones, and surf on their laptops, not sequentially but all at once. True, they’re not listening to their iPods in class, and they may find that inconvenient, since they like a soundtrack accompanying them as they go through life. But they’re taking advantage of every other technology they can cram into their backpacks. They claim it helps them learn, even if their parents and teachers are not convinced.

Too old to multitask? The author texting while writing on a laptop and listening to tunes.

Recently one of my students, a college senior, added to this panoply of technology an older form of classroom inattention: while I explored the niceties of English grammar, he was doing homework for another class. When I asked him to put away the homework and pay attention, he replied that he was paying attention, just multitasking to maximize efficiency. “I can multitask too,” I said, taking out my cell phone and starting to text as I went on with the lesson.

My students didn’t like this. They expected their teacher’s full attention, even if they weren’t going to give me theirs. Plus, they argued, “When you text, you have to stop talking so you can look at the keyboard. That’s not multitasking.” I was using a computer before most of them were born, but they were right, I can’t talk and text. Their pitying expressions said it all: too old to multitask. But what really got them was the thought that I might actually want to multitask, that I might be able to sneak in another activity while I was teaching them.

Although it’s gotten a lot of attention in the digital age, multitasking isn’t new, nor is it the sole property of the young. We commonly do two things at once: singing while playing an instrument, driving while talking to a passenger, surfing the web while watching TV. Despite the fact that a growing body of research suggests that multitasking decreases the efficiency with which we perform simultaneous activities, the idiom “he can’t walk and chew gum at the same time” shows that we expect a certain amount of multitasking to be normal, if not mandatory.

As for predigital, adult multitasking, office workers have been typing, answering phones, and listening to music, since, like, forever, without any loss of efficiency, except of course when Richard Nixon’s secretary, Rose Mary Woods, blamed the 18½ minute gap on one of the Watergate tapes on a multitasking mishap. Woods was listening to the tape and transcribing it when the phone rang. As she leaned

By: Rebecca, on 11/11/2009
Blog: OUPblog
JacketFlap tags: internet, Technology, Blogs, Pencil, digital, A-Featured, Media, Online Resources, Dennis Baron, Philip Greenspun

Dennis Baron is Professor of English and Linguistics at the University of Illinois. His book, A Better Pencil: Readers, Writers, and the Digital Revolution, looks at the evolution of communication technology, from pencils to pixels. In this post, also posted on Baron’s personal blog The Web of Language, he looks at the dilemma of being old in the internet age.

Philip Greenspun, an MIT software engineer and hi-tech guru, argues in a recent blog post that “technology reduces the value of old people.” It’s not that old people don’t do technology. On the contrary, many of them are heavy users of computers and cell phones. It’s that the young won’t bother tapping the knowledge of their elders because they can get so much more, so much faster, from Wikipedia and Google.

It was adults, not the young, who invented computers, programmed them, and created the internet. OK, maybe not old adults, in some cases maybe not even old-enough-to-buy-beer adults, but adults nonetheless. Plus, the over-35 set is Facebook’s fastest growing demographic.

Even so, despite starting the computer revolution, and despite their presence on the World Wide Web today, the old are fast becoming irrelevant. According to Greenspun, “An old person will know more than a young person, but can any person, young or old, know as much as Google and Wikipedia? Why would a young person ask an elder the answer to a fact question that can be solved authoritatively in 10 seconds with a Web search?”

Why indeed? With knowledge located deep in Google’s server farms instead of in the collective memories of senior citizens, the old today are fast becoming useless. Might as well put them out on the ice floe and let them float off to whatever comes next.

According to the federal government, which is never wrong about these things, I myself became officially old, and therefore useless as a repository of wisdom and memory, last Spring. But I’m not worried about being put out to sea on an ice floe, because thanks to global warming, the ice is melting so fast that it poses no danger. There’s not even enough ice out there to sink another Titanic, though if someone built a new Titanic people wouldn’t sail on it because it probably wouldn’t have free wi-fi.

I found out all I know about global warming and the shrinking ice caps and even the Titanic not from that well-known American elder, Al Gore, but from Wikipedia. Wikipedia also told me that Al Gore, who is no spring chicken, invented the internet. I learned from Google that there was no free wi-fi before the internet, and no such thing as a free lunch.

Socrates once warned that our increased reliance on writing would weaken human memory — everything we’d need to remember would be stored in documents, not brain cells, so instead of remembering stuff, we could just look it up. Socrates knew all about brain cells, of course, because he looked that up in a Greek encyclopedia (he didn’t use the Encyclopaedia Britannica, because he couldn’t read English). And just as he predicted, Socrates, who was no spring chicken, had to look up brain cells again a week later, because he forgot what it said.

2,400 years have passed since Socrates drank hemlock — that was his fellow Athenians’ way of putting an irrelevant old man out to sea — but it looks like our current dependence on computers is rendering old people’s memories irrelevant once again. And that’s probably a good thing, because as Socrates learned the hard way, old people’s memories are notoriously unreliable, which is why Al Gore, who foresaw that this would happen, also invented sticky notes.

David’s “The Death of Socrates.” We remember the Greek philosopher’s critique of writing because his student Plato wrote it down on sticky notes.

Like old people, old elephants are also no longer necessary. Elephants became an endangered species not because hunters killed them for the ivory in their tusks but because now that we have computers, no one cared that an elephant never forgets. Technology reduced the value of elephants, and so the elephants just wandered off to the elephants’ burial ground to wait for whatever comes next. And also because the elephants’ burial ground has free wi-fi.

Unlike elephants and people, computers never forget, so we can rest assured that the value of computers will never be reduced. Unlike fallible life-form-based memory banks, computers preserve their information forever, regardless of disk crashes, magnetic fields, coffee spills on keyboards, or inept users who accidentally erase an important file.

And there’s no need to throw out your 5.25″ floppies, laser disks, minidisks, Betamax, 8-track, flash drives, or DVDs just because some new digital medium becomes popular, because unlike writing on clay, stone, silk, papyrus, vellum, parchment, newsprint, or 100% rag bond paper, all computerized information is always forward-compatible with any upgrades or innovations that come along.

Plus all the information stored in computer clouds is totally reliable and always available, except of course for those pesky T-Mobile Sidekick phones whose data somehow disappeared. Assuming the cable’s not down, Google invariably shows us exactly what we’re looking for, or something that’s at least close enough to it, and Wikipedia is never wrong, ever. That’s because the information on Google and Wikipedia is put there by robots, or maybe intelligent life forms from outer space, not by people of a certain age who have to write stuff down on stickies, just as Socrates did, so they don’t forget it.

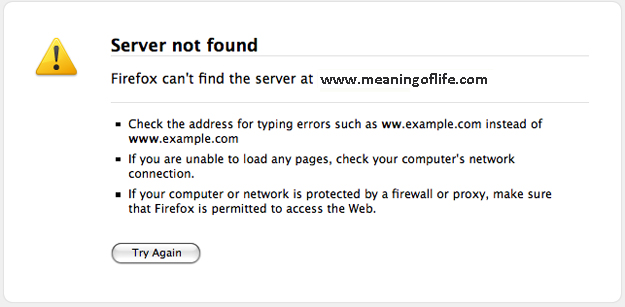

And now that I don’t have to remember all that lore that elders were once responsible for, my brain cells have been freed up to do other important stuff, like spending lots more time online looking for the meaning of life and what comes next, assuming there’s free wi-fi at the coffee shop.

“tall, grande, venti” that has invaded our discourse. But highly-paid consultants, not minimum-wage coffee slingers, created those terms (you won’t find a grande or a venti in Italian coffee bars). Consultants also told “Starbuck’s” to omit the apostrophe from its corporate name and to call its workers baristas, not coffee-jerks.
